Forward-looking: For a long time, most of the biggest innovations in semiconductors happened in client devices. The surge in processing power for smartphones, following the advancements in low-power CPUs and GPUs for notebooks, enabled the mobile-led computing world in which we now find ourselves. Recently, however, there's been a marked shift to chip innovation for servers, reflecting both a more competitive marketplace and an explosion in new types of computing architectures designed to accelerate different types of workloads, particularly AI and machine learning.

At this week's Hot Chips conference, this intense server focus for the semiconductor industry was on display in a number of ways. From the debut of the world's largest chip---the 1.2 trillion transistor 300mm wafer-sized AI accelerator from startup Cerebras Systems---to new developments in Arm's Neoverse N1 server-focused designs, to the latest iteration of IBM's Power CPU, to a keynote speech on server and high-performance compute innovation from AMD CEO Dr. Lisa Su, there was a multitude of innovations that highlighted the pace of change currently impacting the server market.

One of the biggest innovations expected to impact the server market is the release of AMD's second-generation Epyc 7002 series of server CPUs, which had been codenamed "Rome." At the launch event for the line earlier this month, as well as at Hot Chips, AMD highlighted the impressive capabilities of the new chips, including many world-record performance numbers on both single- and dual-socket server platforms.

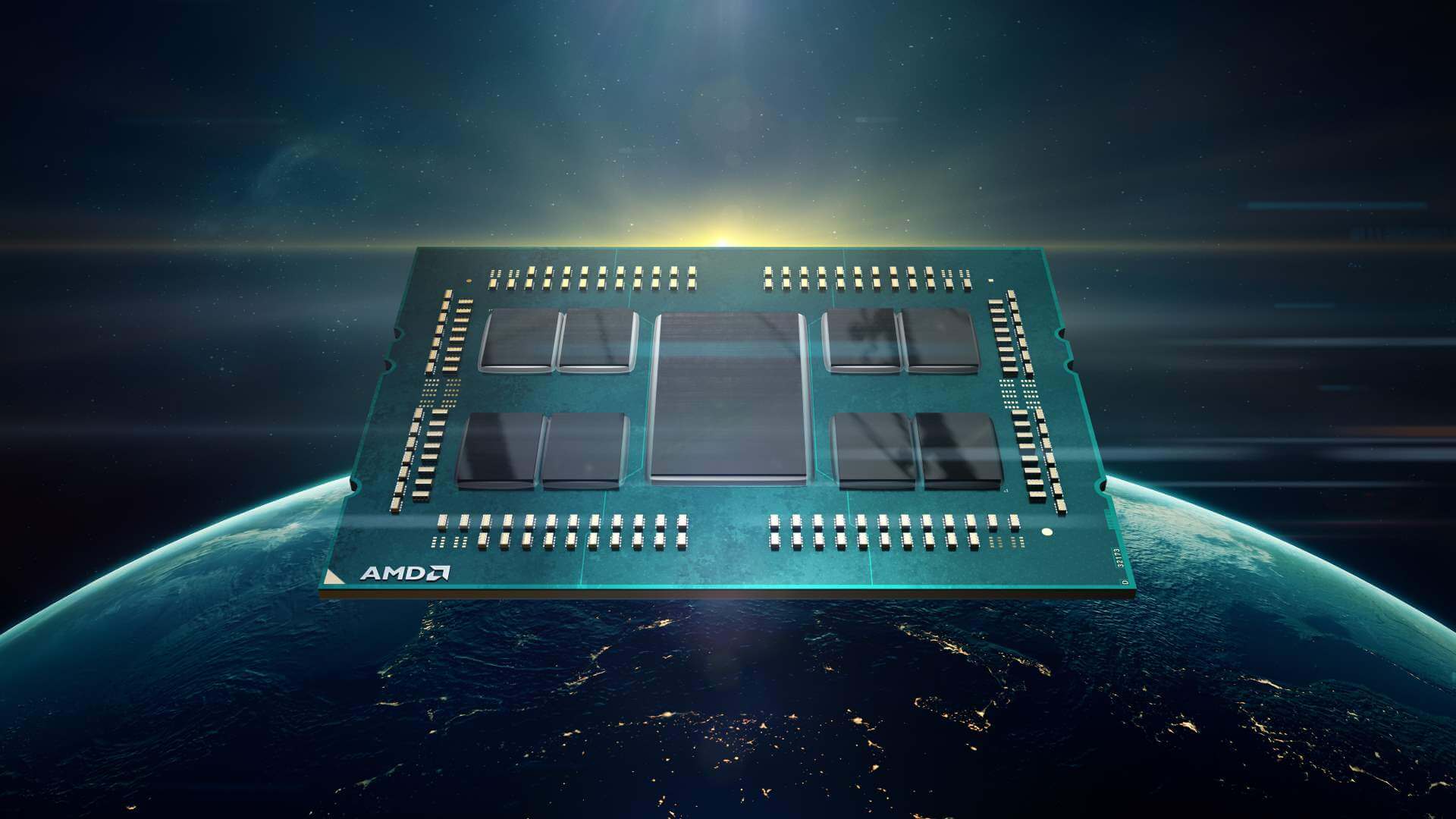

The Epyc 7002 uses the company's new Zen 2 microarchitecture and is the first x86 server CPU built on a 7nm process technology and the first x86 server CPU to leverage PCIe Gen 4 for connectivity. Like the company's latest Ryzen line of desktop CPUs, the new Epyc series is based on a chiplet design, with up to 8 separate CPU chips (each of which can host up to 8 cores) surrounding a single I/O die and connected together via the company's Infinity Fabric technology. It's a modern chip structure with an overall architecture that's expected to become the standard moving forward, as most companies start to move away from large monolithic designs to combinations of smaller dies, built on different process nodes and packaged together into an SoC (system on a chip).

The move to a 7nm manufacturing process for the new Epyc line, in particular, is seen as a key advantage for AMD, as it allows the company to offer up to 2x the density, 1.25x the frequency at the same power, or ½ the power requirements at the same performance level as its previous generation designs. Toss in a 15% increase in instructions per clock (IPC) as a result of Zen 2 microarchitecture changes, and the end result is an impressive line of new CPUs that promise to bring much-needed compute performance improvements to the cloud and many other enterprise-level workloads.
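To get a rough sense of how those figures could combine, here is a back-of-the-envelope sketch in Python. It assumes that per-core throughput scales as clock frequency multiplied by instructions per clock, and that the 1.25x frequency option is the one chosen among the three process trade-offs. Both are simplifying assumptions for illustration rather than AMD figures, and the function name is hypothetical. Real workloads will vary with memory, I/O, and power limits.

```python
# Back-of-the-envelope estimate only. The multiplicative model below is an
# assumption made for illustration; it is not a figure published by AMD.

FREQ_GAIN_SAME_POWER = 1.25  # from the article: 1.25x frequency at the same power
IPC_GAIN = 1.15              # from the article: 15% more instructions per clock


def per_core_uplift(freq_gain: float, ipc_gain: float) -> float:
    """Estimate per-core throughput gain as (clock gain) x (IPC gain)."""
    return freq_gain * ipc_gain


uplift = per_core_uplift(FREQ_GAIN_SAME_POWER, IPC_GAIN)
print(f"Estimated per-core uplift at the same power: {uplift:.2f}x")
```

Under these assumptions the two gains compound rather than simply add, which is why even modest-sounding improvements in frequency and IPC can produce a sizable generational jump.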

Equally important, the new Epyc line positions AMD more competitively against Intel in the server market than it has been for over 20 years. After decades of holding more than 95% market share in servers, Intel is finally facing some serious competition and that, in turn, has led to a very dynamic market for server and high-performance computing, all of which promises to benefit companies and users of all types. It's a classic example of the benefits of a competitive market.

The competitive threat has also led Intel to make some important additions to its portfolio of computing architectures. For the last year or so, Intel has been talking about the capabilities of its Nervana acquisition, and at Hot Chips the company went into more detail about its forthcoming Nervana-powered Spring Crest line of AI accelerator cards, including the NNP-T and the NNP-I. The Intel Nervana NNP-T (Neural Network Processor for Training) card features a dedicated Nervana chip with 24 tensor cores, an Intel Xeon Scalable CPU, and 32GB of HBM (High Bandwidth Memory). Interestingly, the onboard CPU handles several functions, including managing communications across the different elements on the card itself.

As part of its development process, Nervana determined that a number of the key challenges in training models for deep learning center on the need for extremely fast access to large amounts of training data. As a result, the design of its chip focuses equally on compute (the matrix multiplication and other methods commonly used in AI training), memory (four banks of 8GB HBM), and communications (both shuttling data across the chip and from chip to chip across multi-card implementations). On the software side, Intel initially announced native support for the cards with Google's TensorFlow and Baidu's PaddlePaddle AI frameworks but said support for more would come later this year.

AI accelerators, in general, are expected to be an extremely active area of development for the semiconductor business over the next several years, with much of the early focus directed towards server applications. At Hot Chips, for example, several other companies including Nvidia, Xilinx and Huawei also talked about work they were doing in the area of server-based AI accelerators.

Because much of what they do is hidden behind the walls of enterprise data centers and large cloud providers, server-focused chip advancements are generally little known and not well understood. But the kinds of advancements now happening in this area do impact all of us in many ways that we don't always recognize. Ultimately, the payoff for the work many of these companies are doing will show up in faster, more compelling cloud computing experiences across a number of different applications in the months and years to come.

Bob O'Donnell is the founder and chief analyst of TECHnalysis Research, LLC, a technology consulting and market research firm. You can follow him on Twitter @bobodtech. This article was originally published on Tech.pinions.